Addition: Difference between revisions

m Reverted edits by Welcome to wiki123456789 (talk) to last version by D.Lazard |


Revision as of 19:28, 11 February 2013

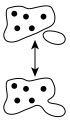

Addition is a mathematical operation that represents combining collections of objects together into a larger collection. It is signified by the plus sign (+). For example, in the picture on the right, there are 3 + 2 apples—meaning three apples and two other apples—which is the same as five apples. Therefore, 3 + 2 = 5. Besides counting fruits, addition can also represent combining other physical and abstract quantities using different kinds of numbers: negative numbers, fractions, irrational numbers, vectors, decimals and more.

Addition follows several important patterns. It is commutative, meaning that order does not matter, and it is associative, meaning that when one adds more than two numbers, the order in which the additions are performed does not matter (see Summation). Repeated addition of 1 is the same as counting; addition of 0 does not change a number. Addition also obeys predictable rules concerning related operations such as subtraction and multiplication. All of these rules can be proven, starting with the addition of natural numbers and generalizing up through the real numbers and beyond. General binary operations that continue these patterns are studied in abstract algebra.

Performing addition is one of the simplest numerical tasks. Addition of very small numbers is accessible to toddlers; the most basic task, 1 + 1, can be performed by infants as young as five months and even some animals. In primary education, students are taught to add numbers in the decimal system, starting with single digits and progressively tackling more difficult problems. Mechanical aids range from the ancient abacus to the modern computer, where research on the most efficient implementations of addition continues to this day.

Notation and terminology

Addition is written using the plus sign "+" between the terms; that is, in infix notation. The result is expressed with an equals sign. For example,

- 1 + 1 = 2 (verbally, "one plus one equals two")

- 2 + 2 = 4 (verbally, "two plus two equals four")

- 3 + 3 = 6 (verbally, "three plus three equals six")

- 5 + 4 + 2 = 11 (see "associativity" below)

- 3 + 3 + 3 + 3 = 12 (see "multiplication" below)

There are also situations where addition is "understood" even though no symbol appears:

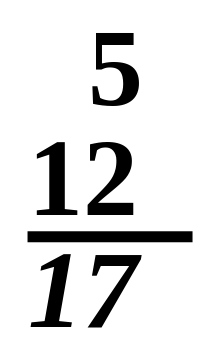

5 + 12 = 17

- A column of numbers, with the last number in the column underlined, usually indicates that the numbers in the column are to be added, with the sum written below the underlined number.

- A whole number followed immediately by a fraction indicates the sum of the two, called a mixed number.[2] For example,

3½ = 3 + ½ = 3.5.

This notation can cause confusion since in most other contexts juxtaposition denotes multiplication instead.

The sum of a series of related numbers can be expressed through capital sigma notation, which compactly denotes iteration. For example,

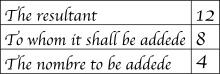

The numbers or the objects to be added in general addition are called the terms, the addends, or the summands; this terminology carries over to the summation of multiple terms. This is to be distinguished from factors, which are multiplied. Some authors call the first addend the augend. In fact, during the Renaissance, many authors did not consider the first addend an "addend" at all. Today, due to the commutative property of addition, "augend" is rarely used, and both terms are generally called addends.[3]

All of this terminology derives from Latin. "Addition" and "add" are English words derived from the Latin verb addere, which is in turn a compound of ad "to" and dare "to give", from the Proto-Indo-European root *deh₃- "to give"; thus to add is to give to.[3] Using the gerundive suffix -nd results in "addend", "thing to be added".[4] Likewise from augere "to increase", one gets "augend", "thing to be increased".

"Sum" and "summand" derive from the Latin noun summa "the highest, the top" and associated verb summare. This is appropriate not only because the sum of two positive numbers is greater than either, but because it was once common to add upward, contrary to the modern practice of adding downward, so that a sum was literally higher than the addends.[6] Addere and summare date back at least to Boethius, if not to earlier Roman writers such as Vitruvius and Frontinus; Boethius also used several other terms for the addition operation. The later Middle English terms "adden" and "adding" were popularized by Chaucer.[7]

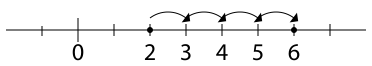

Interpretations

Addition is used to model countless physical processes. Even for the simple case of adding natural numbers, there are many possible interpretations and even more visual representations.

Combining sets

Possibly the most fundamental interpretation of addition lies in combining sets:

- When two or more disjoint collections are combined into a single collection, the number of objects in the single collection is the sum of the number of objects in the original collections.

This interpretation is easy to visualize, with little danger of ambiguity. It is also useful in higher mathematics; for the rigorous definition it inspires, see Natural numbers below. However, it is not obvious how one should extend this version of addition to include fractional numbers or negative numbers.[8]

One possible fix is to consider collections of objects that can be easily divided, such as pies or, still better, segmented rods.[9] Rather than just combining collections of segments, rods can be joined end-to-end, which illustrates another conception of addition: adding not the rods but the lengths of the rods.

Extending a length

A second interpretation of addition comes from extending an initial length by a given length:

- When an original length is extended by a given amount, the final length is the sum of the original length and the length of the extension.

The sum a + b can be interpreted as a binary operation that combines a and b, in an algebraic sense, or it can be interpreted as the addition of b more units to a. Under the latter interpretation, the parts of a sum a + b play asymmetric roles, and the operation a + b is viewed as applying the unary operation +b to a. Instead of calling both a and b addends, it is more appropriate to call a the augend in this case, since a plays a passive role. The unary view is also useful when discussing subtraction, because each unary addition operation has an inverse unary subtraction operation, and vice versa.

Properties

Commutativity

Addition is commutative, meaning that one can reverse the order of the terms in a sum and the result stays the same. Symbolically, if a and b are any two numbers, then

- a + b = b + a.

The fact that addition is commutative is known as the "commutative law of addition". This phrase suggests that there are other commutative laws: for example, there is a commutative law of multiplication. However, many binary operations are not commutative, such as subtraction and division, so it is misleading to speak of an unqualified "commutative law".

Associativity

A somewhat subtler property of addition is associativity, which comes up when one tries to define repeated addition. Should the expression

- "a + b + c"

be defined to mean (a + b) + c or a + (b + c)? That addition is associative tells us that the choice of definition is irrelevant. For any three numbers a, b, and c, it is true that

- (a + b) + c = a + (b + c).

For example, (1 + 2) + 3 = 3 + 3 = 6 = 1 + 5 = 1 + (2 + 3). Not all operations are associative, so in expressions with other operations like subtraction, it is important to specify the order of operations.

Identity element

When adding zero to any number, the quantity does not change; zero is the identity element for addition, also known as the additive identity. In symbols, for any a,

- a + 0 = 0 + a = a.

This law was first identified in Brahmagupta's Brahmasphutasiddhanta in 628, although he wrote it as three separate laws, depending on whether a is negative, positive, or zero itself, and he used words rather than algebraic symbols. Later Indian mathematicians refined the concept; around the year 830, Mahavira wrote, "zero becomes the same as what is added to it", corresponding to the unary statement 0 + a = a. In the 12th century, Bhaskara wrote, "In the addition of cipher, or subtraction of it, the quantity, positive or negative, remains the same", corresponding to the unary statement a + 0 = a.[10]

Successor

In the context of integers, addition of one also plays a special role: for any integer a, the integer (a + 1) is the least integer greater than a, also known as the successor of a. Because of this succession, the value of a + b, for a natural number b, can also be seen as the b-th successor of a, making addition iterated succession.

Units

To numerically add physical quantities with units, they must first be expressed with common units. For example, if a measure of 5 feet is extended by 2 inches, the sum is 62 inches, since 60 inches is synonymous with 5 feet. On the other hand, it is usually meaningless to try to add 3 meters and 4 square meters, since those units are incomparable; this sort of consideration is fundamental in dimensional analysis.
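The conversion step in the feet-and-inches example can be sketched as follows. This is a minimal illustration; the helper name `to_inches` and the small unit table are assumptions made for this example, not part of any standard library.

```python
# Hypothetical conversion table: how many inches one of each unit contains.
INCHES_PER_UNIT = {"inch": 1, "foot": 12}

def to_inches(value, unit):
    """Express a length in inches so that it can be added to other lengths."""
    return value * INCHES_PER_UNIT[unit]

# 5 feet extended by 2 inches: convert both to a common unit, then add.
total_inches = to_inches(5, "foot") + to_inches(2, "inch")
print(total_inches)  # 62
```

A quantity such as 4 square meters has no entry in a table of lengths, which mirrors the point that incomparable units cannot be added.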

Performing addition

Innate ability

Studies on mathematical development starting around the 1980s have exploited the phenomenon of habituation: infants look longer at situations that are unexpected.[11] A seminal experiment by Karen Wynn in 1992 involving Mickey Mouse dolls manipulated behind a screen demonstrated that five-month-old infants expect 1 + 1 to be 2, and they are comparatively surprised when a physical situation seems to imply that 1 + 1 is either 1 or 3. This finding has since been affirmed by a variety of laboratories using different methodologies.[12] Another 1992 experiment with older toddlers, between 18 and 35 months, exploited their development of motor control by allowing them to retrieve ping-pong balls from a box; the youngest responded well for small numbers, while older subjects were able to compute sums up to 5.[13]

Even some nonhuman animals show a limited ability to add, particularly primates. In a 1995 experiment imitating Wynn's 1992 result (but using eggplants instead of dolls), rhesus macaques and cottontop tamarins performed similarly to human infants. More dramatically, after being taught the meanings of the Arabic numerals 0 through 4, one chimpanzee was able to compute the sum of two numerals without further training.[14]

Discovering addition as children

Typically, children first master counting. When given a problem that requires that two items and three items be combined, young children model the situation with physical objects, often fingers or a drawing, and then count the total. As they gain experience, they learn or discover the strategy of "counting-on": asked to find two plus three, children count three past two, saying "three, four, five" (usually ticking off fingers), and arriving at five. This strategy seems almost universal; children can easily pick it up from peers or teachers.[15] Most discover it independently. With additional experience, children learn to add more quickly by exploiting the commutativity of addition by counting up from the larger number, in this case starting with three and counting "four, five." Eventually children begin to recall certain addition facts ("number bonds"), either through experience or rote memorization. Once some facts are committed to memory, children begin to derive unknown facts from known ones. For example, a child asked to add six and seven may know that 6+6=12 and then reason that 6+7 is one more, or 13.[16] Such derived facts can be found very quickly, and most elementary school students eventually rely on a mixture of memorized and derived facts to add fluently.[17]

Decimal system

The prerequisite to addition in the decimal system is the fluent recall or derivation of the 100 single-digit "addition facts". One could memorize all the facts by rote, but pattern-based strategies are more enlightening and, for most people, more efficient:[18]

- Commutative property: Mentioned above, using the pattern a + b = b + a reduces the number of "addition facts" from 100 to 55.

- One or two more: Adding 1 or 2 is a basic task, and it can be accomplished through counting on or, ultimately, intuition.[18]

- Zero: Since zero is the additive identity, adding zero is trivial. Nonetheless, in the teaching of arithmetic, some students are introduced to addition as a process that always increases the addends; word problems may help rationalize the "exception" of zero.[18]

- Doubles: Adding a number to itself is related to counting by two and to multiplication. Doubles facts form a backbone for many related facts, and students find them relatively easy to grasp.[18]

- Near-doubles: Sums such as 6+7=13 can be quickly derived from the doubles fact 6+6=12 by adding one more, or from 7+7=14 but subtracting one.[18]

- Five and ten: Sums of the form 5+x and 10+x are usually memorized early and can be used for deriving other facts. For example, 6+7=13 can be derived from 5+7=12 by adding one more.[18]

- Making ten: An advanced strategy uses 10 as an intermediate for sums involving 8 or 9; for example, 8 + 6 = 8 + 2 + 4 = 10 + 4 = 14.[18]
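The reduction from 100 to 55 addition facts under the commutative property can be checked by direct enumeration. The following is a small sketch; the variable names are chosen only for this illustration.

```python
from itertools import product

digits = range(10)
# All ordered single-digit sums a + b: 10 * 10 = 100 facts.
ordered_facts = list(product(digits, digits))
# Treat a + b and b + a as the same fact by sorting each pair.
unordered_facts = {tuple(sorted(pair)) for pair in ordered_facts}
print(len(ordered_facts), len(unordered_facts))  # 100 55
```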

As students grow older, they commit more facts to memory, and learn to derive other facts rapidly and fluently. Many students never commit all the facts to memory, but can still find any basic fact quickly.[17]

The standard algorithm for adding multidigit numbers is to align the addends vertically and add the columns, starting from the ones column on the right. If the sum of a column exceeds nine, the extra digit is "carried" into the next column.[19] An alternate strategy starts adding from the most significant digit on the left; this route makes carrying a little clumsier, but it is faster at getting a rough estimate of the sum. There are many other alternative methods.
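The right-to-left column method can be sketched in a few lines. This is an illustrative version that works on decimal digit strings; the function name `add_decimal_strings` is an assumption for this example, and only non-negative integers are handled.

```python
def add_decimal_strings(x, y):
    """Add two non-negative integers given as decimal digit strings."""
    # Align the addends on the right by padding the shorter one with zeros.
    width = max(len(x), len(y))
    x, y = x.zfill(width), y.zfill(width)

    digits = []
    carry = 0
    # Work through the columns, starting from the ones column on the right.
    for dx, dy in zip(reversed(x), reversed(y)):
        column = int(dx) + int(dy) + carry
        digits.append(str(column % 10))  # digit written under the column
        carry = column // 10             # extra digit carried to the next column
    if carry:
        digits.append(str(carry))
    return "".join(reversed(digits))

print(add_decimal_strings("27", "59"))  # 86
print(add_decimal_strings("999", "1"))  # 1000
```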

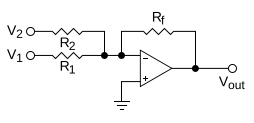

Computers

Analog computers work directly with physical quantities, so their addition mechanisms depend on the form of the addends. A mechanical adder might represent two addends as the positions of sliding blocks, in which case they can be added with an averaging lever. If the addends are the rotation speeds of two shafts, they can be added with a differential. A hydraulic adder can add the pressures in two chambers by exploiting Newton's second law to balance forces on an assembly of pistons. The most common situation for a general-purpose analog computer is to add two voltages (referenced to ground); this can be accomplished roughly with a resistor network, but a better design exploits an operational amplifier.[20]

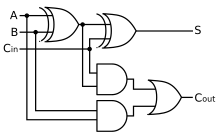

Addition is also fundamental to the operation of digital computers, where the efficiency of addition, in particular the carry mechanism, is an important limitation to overall performance.

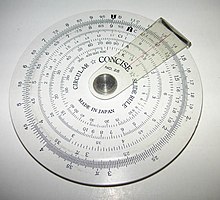

Adding machines, mechanical calculators whose primary function was addition, were the earliest automatic, digital computers. Wilhelm Schickard's 1623 Calculating Clock could add and subtract, but it was severely limited by an awkward carry mechanism. Burnt during its construction in 1624 and unknown to the world for more than three centuries, it was rediscovered in 1957[21] and therefore had no impact on the development of mechanical calculators.[22] Blaise Pascal invented the mechanical calculator in 1642[23] with an ingenious gravity-assisted carry mechanism. Pascal's calculator was limited by its carry mechanism in a different sense: its wheels turned only one way, so it could add but not subtract, except by the method of complements. By 1674 Gottfried Leibniz made the first mechanical multiplier; it was still powered, if not motivated, by addition.[24]

Adders execute integer addition in electronic digital computers, usually using binary arithmetic. The simplest architecture is the ripple carry adder, which follows the standard multi-digit algorithm. One slight improvement is the carry skip design, again following human intuition; one does not perform all the carries in computing 999 + 1, but one bypasses the group of 9s and skips to the answer.[25]
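A ripple carry adder can be simulated in software to show how the carry moves from one bit position to the next. The sketch below is a simplified model, not a description of any particular hardware design; the function names and the default 8-bit width are assumptions for illustration.

```python
def full_adder(a, b, carry_in):
    """One-bit full adder: returns (sum_bit, carry_out)."""
    sum_bit = a ^ b ^ carry_in
    carry_out = (a & b) | (carry_in & (a ^ b))
    return sum_bit, carry_out

def ripple_carry_add(x, y, width=8):
    """Add two unsigned integers bit by bit, least significant bit first."""
    result = 0
    carry = 0
    for i in range(width):
        a = (x >> i) & 1
        b = (y >> i) & 1
        s, carry = full_adder(a, b, carry)
        result |= s << i
    return result, carry  # carry is the bit that overflows the top position

print(ripple_carry_add(0b0101, 0b0011))  # (8, 0)
print(ripple_carry_add(255, 1))          # (0, 1)
```

Each loop iteration has to wait for the carry from the previous one, which is the sequential dependency that faster adder designs try to shorten.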

Since they compute digits one at a time, the above methods are too slow for most modern purposes. In modern digital computers, integer addition is typically the fastest arithmetic instruction, yet it has the largest impact on performance, since it underlies all the floating-point operations as well as such basic tasks as address generation during memory access and fetching instructions during branching. To increase speed, modern designs calculate digits in parallel; these schemes go by such names as carry select, carry lookahead, and the Ling pseudocarry. Almost all modern implementations are, in fact, hybrids of these last three designs.[26]

Unlike addition on paper, addition on a computer often changes the addends. On the ancient abacus and adding board, both addends are destroyed, leaving only the sum. The influence of the abacus on mathematical thinking was strong enough that early Latin texts often claimed that in the process of adding "a number to a number", both numbers vanish.[27] In modern times, the ADD instruction of a microprocessor replaces the augend with the sum but preserves the addend.[28] In a high-level programming language, evaluating a + b does not change either a or b; if the goal is to replace a with the sum this must be explicitly requested, typically with the statement a = a + b. Some languages such as C or C++ allow this to be abbreviated as a += b.

Addition of natural and real numbers

To prove the usual properties of addition, one must first define addition for the context in question. Addition is first defined on the natural numbers. In set theory, addition is then extended to progressively larger sets that include the natural numbers: the integers, the rational numbers, and the real numbers.[29] (In mathematics education,[30] positive fractions are added before negative numbers are even considered; this is also the historical route.)[31]

Natural numbers

There are two popular ways to define the sum of two natural numbers a and b. If one defines natural numbers to be the cardinalities of finite sets (the cardinality of a set is the number of elements in the set), then it is appropriate to define their sum as follows:

- Let N(S) be the cardinality of a set S. Take two disjoint sets A and B, with N(A) = a and N(B) = b. Then a + b is defined as N(A ∪ B).[32]

Here, A ∪ B is the union of A and B. An alternate version of this definition allows A and B to possibly overlap and then takes their disjoint union, a mechanism that allows common elements to be separated out and therefore counted twice.

The other popular definition is recursive:

- Let n⁺ be the successor of n, that is, the number following n in the natural numbers, so 0⁺ = 1, 1⁺ = 2. Define a + 0 = a. Define the general sum recursively by a + (b⁺) = (a + b)⁺. Hence 1 + 1 = 1 + 0⁺ = (1 + 0)⁺ = 1⁺ = 2.[33]

Again, there are minor variations upon this definition in the literature. Taken literally, the above definition is an application of the Recursion Theorem on the poset N².[34] On the other hand, some sources prefer to use a restricted Recursion Theorem that applies only to the set of natural numbers. One then considers a to be temporarily "fixed", applies recursion on b to define a function "a + ", and pastes these unary operations for all a together to form the full binary operation.[35]

This recursive formulation of addition was developed by Dedekind as early as 1854, and he would expand upon it in the following decades.[36] He proved the associative and commutative properties, among others, through mathematical induction; for examples of such inductive proofs, see Addition of natural numbers.
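The recursive definition translates almost directly into code. In the sketch below, the names `successor` and `add` are chosen for illustration, and Python's built-in increment stands in for the primitive successor operation, which is an assumption of this example rather than part of the definition.

```python
def successor(n):
    """The number following n in the natural numbers."""
    return n + 1  # built-in increment stands in for the primitive successor

def add(a, b):
    """Recursive sum: a + 0 = a, and a + successor(b) = successor(a + b)."""
    if b == 0:
        return a
    return successor(add(a, b - 1))

print(add(1, 1))  # 2
print(add(3, 4))  # 7
```

The recursion runs on b only, with a held fixed, which matches the "restricted" reading described above; it is practical only for small b because of Python's recursion limit.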

Integers

The simplest conception of an integer is that it consists of an absolute value (which is a natural number) and a sign (generally either positive or negative). The integer zero is a special third case, being neither positive nor negative. The corresponding definition of addition must proceed by cases:

- For an integer n, let |n| be its absolute value. Let a and b be integers. If either a or b is zero, treat it as an identity. If a and b are both positive, define a + b = |a| + |b|. If a and b are both negative, define a + b = −(|a|+|b|). If a and b have different signs, define a + b to be the difference between |a| and |b|, with the sign of the term whose absolute value is larger.[37]

Although this definition can be useful for concrete problems, it is far too complicated to produce elegant general proofs; there are too many cases to consider.

A much more convenient conception of the integers is the Grothendieck group construction. The essential observation is that every integer can be expressed (not uniquely) as the difference of two natural numbers, so we may as well define an integer as the difference of two natural numbers. Addition is then defined to be compatible with subtraction:

- Given two integers a − b and c − d, where a, b, c, and d are natural numbers, define (a − b) + (c − d) = (a + c) − (b + d).[38]
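The pair-based definition can be tried out by representing the integer a − b as the tuple (a, b). The sketch below is illustrative: the function names are assumptions, and the equality test uses the standard criterion that (a, b) and (c, d) denote the same integer exactly when a + d = c + b, which is not spelled out in the text above.

```python
def add_pairs(p, q):
    """(a - b) + (c - d) = (a + c) - (b + d), on pairs of natural numbers."""
    (a, b), (c, d) = p, q
    return (a + c, b + d)

def same_integer(p, q):
    """(a, b) and (c, d) denote the same integer exactly when a + d = c + b."""
    (a, b), (c, d) = p, q
    return a + d == c + b

minus_two = (1, 3)  # represents 1 - 3
five = (7, 2)       # represents 7 - 2
total = add_pairs(minus_two, five)
print(total)                        # (8, 5)
print(same_integer(total, (3, 0)))  # True
```

No case analysis on signs is needed, and only natural-number addition is used.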

Rational numbers (fractions)

Addition of rational numbers can be computed using the least common denominator, but a conceptually simpler definition involves only integer addition and multiplication:

- Define a/b + c/d = (ad + bc)/(bd).

The commutativity and associativity of rational addition are easy consequences of the laws of integer arithmetic.[39] For a more rigorous and general discussion, see field of fractions.
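The definition that uses only integer addition and multiplication is usually stated as a/b + c/d = (ad + bc)/(bd). Assuming that rule, a minimal sketch looks like this; `add_rationals` is a hypothetical helper, and Python's standard `fractions.Fraction` is used only to check the result.

```python
from fractions import Fraction

def add_rationals(a, b, c, d):
    """Return a/b + c/d as an unreduced (numerator, denominator) pair."""
    return (a * d + b * c, b * d)

num, den = add_rationals(3, 4, 1, 8)
print(num, den)  # 28 32
# The unreduced result names the same rational number as the library's sum.
print(Fraction(num, den) == Fraction(3, 4) + Fraction(1, 8))  # True
```

The result is not in lowest terms, which is the price of avoiding the least common denominator.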

Real numbers

A common construction of the set of real numbers is the Dedekind completion of the set of rational numbers. A real number is defined to be a Dedekind cut of rationals: a non-empty set of rationals that is closed downward and has no greatest element. The sum of real numbers a and b is defined element by element:

- Define a + b = {q + r | q ∈ a, r ∈ b}.[40]

This definition was first published, in a slightly modified form, by Richard Dedekind in 1872.[41] The commutativity and associativity of real addition are immediate; defining the real number 0 to be the set of negative rationals, it is easily seen to be the additive identity. Probably the trickiest part of this construction pertaining to addition is the definition of additive inverses.[42]

Unfortunately, dealing with multiplication of Dedekind cuts is a case-by-case nightmare similar to the addition of signed integers. Another approach is the metric completion of the rational numbers. A real number is essentially defined to be the limit of a Cauchy sequence of rationals, lim aₙ. Addition is defined term by term:

- Define lim aₙ + lim bₙ = lim (aₙ + bₙ).[43]

This definition was first published by Georg Cantor, also in 1872, although his formalism was slightly different.[44] One must prove that this operation is well-defined, dealing with co-Cauchy sequences. Once that task is done, all the properties of real addition follow immediately from the properties of rational numbers. Furthermore, the other arithmetic operations, including multiplication, have straightforward, analogous definitions.[45]

Generalizations

- There are many things that can be added: numbers, vectors, matrices, spaces, shapes, sets, functions, equations, strings, chains... —Alexander Bogomolny

There are many binary operations that can be viewed as generalizations of the addition operation on the real numbers. The field of abstract algebra is centrally concerned with such generalized operations, and they also appear in set theory and category theory.

Addition in abstract algebra

In linear algebra, a vector space is an algebraic structure that allows for adding any two vectors and for scaling vectors. A familiar vector space is the set of all ordered pairs of real numbers; the ordered pair (a,b) is interpreted as a vector from the origin in the Euclidean plane to the point (a,b) in the plane. The sum of two vectors is obtained by adding their individual coordinates:

- (a,b) + (c,d) = (a+c,b+d).

This addition operation is central to classical mechanics, in which vectors are interpreted as forces.

In modular arithmetic, the set of integers modulo 12 has twelve elements; it inherits an addition operation from the integers that is central to musical set theory. The set of integers modulo 2 has just two elements; the addition operation it inherits is known in Boolean logic as the "exclusive or" function. In geometry, the sum of two angle measures is often taken to be their sum as real numbers modulo 2π. This amounts to an addition operation on the circle, which in turn generalizes to addition operations on many-dimensional tori.
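The modular examples are easy to check directly. This is a short sketch; `add_mod` is a name chosen for illustration.

```python
def add_mod(a, b, modulus):
    """Add two integers and reduce the result modulo `modulus`."""
    return (a + b) % modulus

# Modulo 12 ("clock" arithmetic): 9 + 5 wraps around to 2.
print(add_mod(9, 5, 12))  # 2

# Modulo 2, addition agrees with exclusive or on every pair of bits.
for a in (0, 1):
    for b in (0, 1):
        assert add_mod(a, b, 2) == a ^ b
```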

The general theory of abstract algebra allows an "addition" operation to be any associative and commutative operation on a set. Basic algebraic structures with such an addition operation include commutative monoids and abelian groups.

Addition in set theory and category theory

A far-reaching generalization of addition of natural numbers is the addition of ordinal numbers and cardinal numbers in set theory. These give two different generalizations of addition of natural numbers to the transfinite. Unlike most addition operations, addition of ordinal numbers is not commutative. Addition of cardinal numbers, however, is a commutative operation closely related to the disjoint union operation.

In category theory, disjoint union is seen as a particular case of the coproduct operation, and general coproducts are perhaps the most abstract of all the generalizations of addition. Some coproducts, such as direct sum and wedge sum, are named to evoke their connection with addition.

Related operations

Arithmetic

Subtraction can be thought of as a kind of addition—that is, the addition of an additive inverse. Subtraction is itself a sort of inverse to addition, in that adding x and subtracting x are inverse functions.

Given a set with an addition operation, one cannot always define a corresponding subtraction operation on that set; the set of natural numbers is a simple example. On the other hand, a subtraction operation uniquely determines an addition operation, an additive inverse operation, and an additive identity; for this reason, an additive group can be described as a set that is closed under subtraction.[46]

Multiplication can be thought of as repeated addition. If a single term x appears in a sum n times, then the sum is the product of n and x. If n is not a natural number, the product may still make sense; for example, multiplication by −1 yields the additive inverse of a number.

In the real and complex numbers, addition and multiplication can be interchanged by the exponential function:

- e^(a + b) = e^a e^b.[47]

This identity allows multiplication to be carried out by consulting a table of logarithms and computing addition by hand; it also enables multiplication on a slide rule. The formula is still a good first-order approximation in the broad context of Lie groups, where it relates multiplication of infinitesimal group elements with addition of vectors in the associated Lie algebra.[48]
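The log-table technique amounts to adding logarithms and then exponentiating. A minimal sketch with Python's standard `math` module follows; it applies to positive numbers only, and `multiply_via_logs` is a hypothetical name.

```python
import math

def multiply_via_logs(x, y):
    """Multiply two positive numbers by adding their natural logarithms."""
    return math.exp(math.log(x) + math.log(y))

# The result matches ordinary multiplication up to floating-point rounding.
print(math.isclose(multiply_via_logs(12.0, 3.5), 42.0))  # True
```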

There are even more generalizations of multiplication than addition.[49] In general, multiplication operations always distribute over addition; this requirement is formalized in the definition of a ring. In some contexts, such as the integers, distributivity over addition and the existence of a multiplicative identity are enough to uniquely determine the multiplication operation. The distributive property also provides information about addition; by expanding the product (1 + 1)(a + b) in both ways, one concludes that addition is forced to be commutative. For this reason, ring addition is commutative in general.[50]

Division is an arithmetic operation remotely related to addition. Since a/b = a(b⁻¹), division is right distributive over addition: (a + b) / c = a / c + b / c.[51] However, division is not left distributive over addition; 1 / (2 + 2) is not the same as 1/2 + 1/2.

Ordering

The maximum operation "max (a, b)" is a binary operation similar to addition. In fact, if two nonnegative numbers a and b are of different orders of magnitude, then their sum is approximately equal to their maximum. This approximation is extremely useful in the applications of mathematics, for example in truncating Taylor series. However, it presents a perpetual difficulty in numerical analysis, essentially since "max" is not invertible. If b is much greater than a, then a straightforward calculation of (a + b) − b can accumulate an unacceptable round-off error, perhaps even returning zero. See also Loss of significance.
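The round-off problem is easy to reproduce with double-precision floating-point numbers, which is what Python's `float` uses on common platforms. The values below are chosen only for illustration.

```python
a = 1.0
b = 1e20  # far larger than a in magnitude

# In double precision, a + b rounds to b, so a is lost entirely.
print((a + b) - b)  # 0.0
# Reordering the arithmetic avoids the cancellation in this particular case.
print(a + (b - b))  # 1.0
```

Here the computed sum a + b equals max(a, b) exactly, so subtracting b cannot recover a.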

The approximation becomes exact in a kind of infinite limit; if either a or b is an infinite cardinal number, their cardinal sum is exactly equal to the greater of the two.[53] Accordingly, there is no subtraction operation for infinite cardinals.[54]

Maximization is commutative and associative, like addition. Furthermore, since addition preserves the ordering of real numbers, addition distributes over "max" in the same way that multiplication distributes over addition:

- a + max (b, c) = max (a + b, a + c).

For these reasons, in tropical geometry one replaces multiplication with addition and addition with maximization. In this context, addition is called "tropical multiplication", maximization is called "tropical addition", and the tropical "additive identity" is negative infinity.[55] Some authors prefer to replace addition with minimization; then the additive identity is positive infinity.[56]

Tying these observations together, tropical addition is approximately related to regular addition through the logarithm:

- log (a + b) ≈ max (log a, log b),

which becomes more accurate as the base of the logarithm increases.[57] The approximation can be made exact by extracting a constant h, named by analogy with Planck's constant from quantum mechanics,[58] and taking the "classical limit" as h tends to zero:

- max (a, b) = lim (h → 0) h log (e^(a/h) + e^(b/h)).

In this sense, the maximum operation is a dequantized version of addition.[59]
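One common way to write this limit is max(a, b) = lim (h → 0) h log(e^(a/h) + e^(b/h)). Assuming that form, the sketch below evaluates the expression for shrinking h. The name `smooth_max` is hypothetical, and the expression is rearranged algebraically (by factoring out the larger exponential) so that small h does not overflow.

```python
import math

def smooth_max(a, b, h):
    """h * log(exp(a/h) + exp(b/h)), rearranged to avoid overflow."""
    m = max(a, b)
    return m + h * math.log(math.exp((a - m) / h) + math.exp((b - m) / h))

a, b = 2.0, 3.0
for h in (1.0, 0.1, 0.01):
    # The printed values approach max(a, b) = 3.0 as h shrinks.
    print(h, smooth_max(a, b, h))
```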

Other ways to add

Incrementation, also known as the successor operation, is the addition of 1 to a number.

Summation describes the addition of arbitrarily many numbers, usually more than just two. It includes the idea of the sum of a single number, which is itself, and the empty sum, which is zero.[60] An infinite summation is a delicate procedure known as a series.[61]

Counting a finite set is equivalent to summing 1 over the set.

Integration is a kind of "summation" over a continuum, or more precisely and generally, over a differentiable manifold. Integration over a zero-dimensional manifold reduces to summation.

Linear combinations combine multiplication and summation; they are sums in which each term has a multiplier, usually a real or complex number. Linear combinations are especially useful in contexts where straightforward addition would violate some normalization rule, such as mixing of strategies in game theory or superposition of states in quantum mechanics.

Convolution is used to add two independent random variables defined by distribution functions. Its usual definition combines integration, subtraction, and multiplication. In general, convolution is useful as a kind of domain-side addition; by contrast, vector addition is a kind of range-side addition.
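For discrete random variables the integral becomes a sum, which makes the idea easy to demonstrate. The sketch below is a discrete analogue of the definition, not the continuous one; the function name `convolve` and the dictionary representation of a distribution are assumptions for this example.

```python
from fractions import Fraction

def convolve(p, q):
    """Distribution of X + Y for independent X ~ p and Y ~ q.

    p and q map each possible value to its probability.
    """
    result = {}
    for x, px in p.items():
        for y, qy in q.items():
            result[x + y] = result.get(x + y, 0) + px * qy
    return result

die = {face: Fraction(1, 6) for face in range(1, 7)}
two_dice = convolve(die, die)
print(two_dice[7])             # 1/6
print(sum(two_dice.values()))  # 1
```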

In literature

- In chapter 9 of Lewis Carroll's Through the Looking-Glass, the White Queen asks Alice, "And you do Addition? ... What's one and one and one and one and one and one and one and one and one and one?" Alice admits that she lost count, and the Red Queen declares, "She can't do Addition".

- In George Orwell's Nineteen Eighty-Four, the value of 2 + 2 is questioned; the State contends that if it declares 2 + 2 = 5, then it is so. See Two plus two make five for the history of this idea.

Notes

- ^ From Enderton (p.138): "...select two sets K and L with card K = 2 and card L = 3. Sets of fingers are handy; sets of apples are preferred by textbooks."

- ^ Devine et al. p.263

- ^ a b Schwartzman p.19

- ^ "Addend" is not a Latin word; in Latin it must be further declined, as in numerus addendus "the number to be added".

- ^ Karpinski pp.56–57, reproduced on p.104

- ^ Schwartzman (p.212) attributes adding upwards to the Greeks and Romans, saying it was about as common as adding downwards. On the other hand, Karpinski (p.103) writes that Leonard of Pisa "introduces the novelty of writing the sum above the addends"; it is unclear whether Karpinski is claiming this as an original invention or simply the introduction of the practice to Europe.

- ^ Karpinski pp.150–153

- ^ See Viro 2001 for an example of the sophistication involved in adding with sets of "fractional cardinality".

- ^ Adding it up (p.73) compares adding measuring rods to adding sets of cats: "For example, inches can be subdivided into parts, which are hard to tell from the wholes, except that they are shorter; whereas it is painful to cats to divide them into parts, and it seriously changes their nature."

- ^ Kaplan pp.69–71

- ^ Wynn p.5

- ^ Wynn p.15

- ^ Wynn p.17

- ^ Wynn p.19

- ^ F. Smith p.130

- ^ Carpenter, Thomas (1999). Children's mathematics: Cognitively guided instruction. Portsmouth, NH: Heinemann. ISBN 0-325-00137-5.

- ^ a b Henry, Valerie J. (2008). "First-grade basic facts: An investigation into teaching and learning of an accelerated, high-demand memorization standard". Journal for Research in Mathematics Education. 39 (2): 153–183. doi:10.2307/30034895.

- ^ a b c d e f g Fosnot and Dolk p. 99

- ^ The word "carry" may be inappropriate for education; Van de Walle (p.211) calls it "obsolete and conceptually misleading", preferring the word "trade".

- ^ Truitt and Rogers pp.1;44–49 and pp.2;77–78

- ^ Jean Marguin p. 48 (1994)

- ^ René Taton, p. 81 (1969)

- ^ Jean Marguin, p. 48 (1994), quoting René Taton (1963)

- ^ Williams pp.122–140

- ^ Flynn and Oberman pp.2, 8

- ^ Flynn and Oberman pp.1–9

- ^ Karpinski pp.102–103

- ^ The identity of the augend and addend varies with architecture. For ADD in x86 see Horowitz and Hill p.679; for ADD in 68k see p.767.

- ^ Enderton chapters 4 and 5, for example, follow this development.

- ^ California standards; see grades 2, 3, and 4.

- ^ Baez (p.37) explains the historical development, in "stark contrast" with the set theory presentation: "Apparently, half an apple is easier to understand than a negative apple!"

- ^ Begle p.49, Johnson p.120, Devine et al. p.75

- ^ Enderton p.79

- ^ For a version that applies to any poset with the descending chain condition, see Bergman p.100.

- ^ Enderton (p.79) observes, "But we want one binary operation +, not all these little one-place functions."

- ^ Ferreirós p.223

- ^ K. Smith p.234, Sparks and Rees p.66

- ^ Enderton p.92

- ^ The verifications are carried out in Enderton p.104 and sketched for a general field of fractions over a commutative ring in Dummit and Foote p.263.

- ^ Enderton p.114

- ^ Ferreirós p.135; see section 6 of Stetigkeit und irrationale Zahlen.

- ^ The intuitive approach, inverting every element of a cut and taking its complement, works only for irrational numbers; see Enderton p.117 for details.

- ^ Textbook constructions are usually not so cavalier with the "lim" symbol; see Burrill (p. 138) for a more careful, drawn-out development of addition with Cauchy sequences.

- ^ Ferreirós p.128

- ^ Burrill p.140

- ^ The set still must be nonempty. Dummit and Foote (p.48) discuss this criterion written multiplicatively.

- ^ Rudin p.178

- ^ Lee p.526, Proposition 20.9

- ^ Linderholm (p.49) observes, "By multiplication, properly speaking, a mathematician may mean practically anything. By addition he may mean a great variety of things, but not so great a variety as he will mean by 'multiplication'."

- ^ Dummit and Foote p.224. For this argument to work, one still must assume that addition is a group operation and that multiplication has an identity.

- ^ For an example of left and right distributivity, see Loday, especially p.15.

- ^ Compare Viro Figure 1 (p.2)

- ^ Enderton calls this statement the "Absorption Law of Cardinal Arithmetic"; it depends on the comparability of cardinals and therefore on the Axiom of Choice.

- ^ Enderton p.164

- ^ Mikhalkin p.1

- ^ Akian et al. p.4

- ^ Mikhalkin p.2

- ^ Litvinov et al. p.3

- ^ Viro p.4

- ^ Martin p.49

- ^ Stewart p.8

References

- History

- Bunt, Jones, and Bedient (1976). The historical roots of elementary mathematics. Prentice-Hall. ISBN 0-13-389015-5.

- Ferreirós, José (1999). Labyrinth of thought: A history of set theory and its role in modern mathematics. Birkhäuser. ISBN 0-8176-5749-5.

- Kaplan, Robert (2000). The nothing that is: A natural history of zero. Oxford UP. ISBN 0-19-512842-7.

- Karpinski, Louis (1925). The history of arithmetic. Rand McNally. LCC QA21.K3.

- Schwartzman, Steven (1994). The words of mathematics: An etymological dictionary of mathematical terms used in English. MAA. ISBN 0-88385-511-9.

- Williams, Michael (1985). A history of computing technology. Prentice-Hall. ISBN 0-13-389917-9.

- Elementary mathematics

- Davison, Landau, McCracken, and Thompson (1999). Mathematics: Explorations & Applications (TE ed.). Prentice Hall. ISBN 0-13-435817-1.

- F. Sparks and C. Rees (1979). A survey of basic mathematics. McGraw-Hill. ISBN 0-07-059902-5.

- Education

- Begle, Edward (1975). The mathematics of the elementary school. McGraw-Hill. ISBN 0-07-004325-6.

- California State Board of Education mathematics content standards Adopted December 1997, accessed December 2005.

- D. Devine, J. Olson, and M. Olson (1991). Elementary mathematics for teachers (2e ed.). Wiley. ISBN 0-471-85947-8.

- National Research Council (2001). Adding it up: Helping children learn mathematics. National Academy Press. ISBN 0-309-06995-5.

- Van de Walle, John (2004). Elementary and middle school mathematics: Teaching developmentally (5e ed.). Pearson. ISBN 0-205-38689-X.

- Cognitive science

- Baroody and Tiilikainen (2003). "Two perspectives on addition development". The development of arithmetic concepts and skills. p. 75. ISBN 0-8058-3155-X.

- Fosnot and Dolk (2001). Young mathematicians at work: Constructing number sense, addition, and subtraction. Heinemann. ISBN 0-325-00353-X.

- Weaver, J. Fred (1982). "Interpretations of number operations and symbolic representations of addition and subtraction". Addition and subtraction: A cognitive perspective. p. 60. ISBN 0-89859-171-6.

- Wynn, Karen (1998). "Numerical competence in infants". The development of mathematical skills. p. 3. ISBN 0-86377-816-X.

- Mathematical exposition

- Bogomolny, Alexander (1996). "Addition". Interactive Mathematics Miscellany and Puzzles (cut-the-knot.org). Archived from the original on 6 February 2006. Retrieved 3 February 2006.

- Dunham, William (1994). The mathematical universe. Wiley. ISBN 0-471-53656-3.

- Johnson, Paul (1975). From sticks and stones: Personal adventures in mathematics. Science Research Associates. ISBN 0-574-19115-1.

- Linderholm, Carl (1971). Mathematics Made Difficult. Wolfe. ISBN 0-7234-0415-1.

- Smith, Frank (2002). The glass wall: Why mathematics can seem difficult. Teachers College Press. ISBN 0-8077-4242-2.

- Smith, Karl (1980). The nature of modern mathematics (3e ed.). Wadsworth. ISBN 0-8185-0352-1.

- Advanced mathematics

- Bergman, George (2005). An invitation to general algebra and universal constructions (2.3e ed.). General Printing. ISBN 0-9655211-4-1.

- Burrill, Claude (1967). Foundations of real numbers. McGraw-Hill. LCC QA248.B95.

- D. Dummit and R. Foote (1999). Abstract algebra (2e ed.). Wiley. ISBN 0-471-36857-1.

- Enderton, Herbert (1977). Elements of set theory. Academic Press. ISBN 0-12-238440-7.

- Lee, John (2003). Introduction to smooth manifolds. Springer. ISBN 0-387-95448-1.

- Martin, John (2003). Introduction to languages and the theory of computation (3e ed.). McGraw-Hill. ISBN 0-07-232200-4.

- Rudin, Walter (1976). Principles of mathematical analysis (3e ed.). McGraw-Hill. ISBN 0-07-054235-X.

- Stewart, James (1999). Calculus: Early transcendentals (4e ed.). Brooks/Cole. ISBN 0-534-36298-2.

- Mathematical research

- Akian, Bapat, and Gaubert (2005). "Min-plus methods in eigenvalue perturbation theory and generalised Lidskii-Vishik-Ljusternik theorem". INRIA reports. arXiv:math.SP/0402090.

- J. Baez and J. Dolan (2001). "From Finite Sets to Feynman Diagrams". Mathematics Unlimited— 2001 and Beyond. p. 29. arXiv:math.QA/0004133. ISBN 3-540-66913-2.

- Litvinov, Maslov, and Sobolevskii (1999). Idempotent mathematics and interval analysis. Reliable Computing, Kluwer.

- Loday, Jean-Louis (2002). "Arithmetree". J. of Algebra. 258: 275. arXiv:math/0112034. doi:10.1016/S0021-8693(02)00510-0.

- Mikhalkin, Grigory (2006). "Tropical Geometry and its applications". To appear at the Madrid ICM. arXiv:math.AG/0601041.

- Viro, Oleg (2001). Casacuberta, Carles; Miró-Roig, Rosa Maria; Verdera, Joan; Xambó-Descamps, Sebastià (eds.). "European Congress of Mathematics: Barcelona, July 10–14, 2000, Volume I". Progress in Mathematics. 201. Basel: Birkhäuser: 135–146. arXiv:0005163. ISBN 3-7643-6417-3.

- Computing

- M. Flynn and S. Oberman (2001). Advanced computer arithmetic design. Wiley. ISBN 0-471-41209-0.

- P. Horowitz and W. Hill (2001). The art of electronics (2e ed.). Cambridge UP. ISBN 0-521-37095-7.

- Jackson, Albert (1960). Analog computation. McGraw-Hill. LCC QA76.4 J3.

- T. Truitt and A. Rogers (1960). Basics of analog computers. John F. Rider. LCC QA76.4 T7.

- Marguin, Jean (1994). Histoire des instruments et machines à calculer, trois siècles de mécanique pensante 1642-1942 (in French). Hermann. ISBN 978-2-7056-6166-3.

- Taton, René (1963). Le calcul mécanique. Que sais-je ? n° 367 (in French). Presses universitaires de France. pp. 20–28.
